Introduction

Since acquiring a new Digital Single Lens Reflex (DSLR) camera in the summer of 2007, I have collected many thousands of images. My camera is a Nikon D200 and I shoot the photographs in Nikon's NEF (RAW) format. Each NEF file is approximately 10 MB in size.

A variety of software tools are able to convert the RAW images into the more usable JPEG format. For a variety of reasons I have chosen to perform most of these conversions using Nikon's Capture NX software. Unfortunately, Capture NX has very primitive support for my workflow and for Digital Asset Management (DAM) in general. Over a period of some time I evaluated a large number of other programs that appeared to address these requirements.

Sadly, none of these appeared to meet my needs.

I discovered a very low level but powerful utility called Exiftool which provides comprehensive capabilities for reading and writing meta information to a wide variety of image file types. Exiftool works with the Perl programming language and by a stroke of good luck I happen to be a fairly proficient Perl programmer. I was therefore able to solve a few of my problems with some command line Perl scripts and Exiftool.

Command line tools are very effective for working with batches of files, but less effective for working with individual files and with images. I continued to be frustrated at the lack of a Graphical User Interface to these tools. Building my own interface using conventional software development techniques (e.g. C++) would be prohibitively time consuming.

After a period of reflection I postulated that it might be feasible to build something of reasonable utility using a web browser to provide the front-end user interface. Some quick and dirty prototypes appeared to confirm the hypothesis. I then embarked upon several days of development to build an implementation that would be sufficiently robust for me to use in "production".

Astute readers will say... but web browsers can't render NEF files. This is absolutely correct; they can't. However, NEF files contain one or more JPEG versions of the image embedded inside them. A Perl CGI script accepts the request from the browser and uses Exiftool to extract one of the JPEG images. Exiftool can also return the camera orientation data from the EXIF data and hence the Perl script can automatically rotate the image into the correct orientation. This lossless rotation is performed by the jpegtran program. Finally, the Perl CGI sends the resulting JPEG to the browser. This sounds like it may be rather slow. It isn't. As best as I can tell, most of the 0.25-0.5 seconds required to get the image on the screen are attributable to the browser's rendering engine.
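The extract-and-rotate step described above can be sketched as follows. This is a Python illustration rather than the Perl CGI script itself. It assumes the `exiftool` and `jpegtran` command line programs are on the PATH and that the embedded JPEG is stored under the `JpgFromRaw` tag (the tag holding the preview can differ between cameras). The function names `jpegtran_args` and `preview_jpeg` are hypothetical.

```python
import subprocess

# EXIF Orientation value -> clockwise rotation (degrees) needed for upright display.
# Mirrored orientations (2, 4, 5, 7) are ignored in this sketch.
ROTATION_FOR_ORIENTATION = {1: 0, 3: 180, 6: 90, 8: 270}


def jpegtran_args(orientation):
    """Return the jpegtran argument list for a given EXIF orientation value."""
    degrees = ROTATION_FOR_ORIENTATION.get(orientation, 0)
    if degrees == 0:
        return []  # nothing to do; the JPEG can be sent as-is
    return ["jpegtran", "-rotate", str(degrees), "-copy", "all"]


def preview_jpeg(nef_path):
    """Extract the embedded JPEG from a NEF file and rotate it losslessly."""
    # -b writes the binary value of the requested tag to stdout.
    # JpgFromRaw is an assumption; some files keep the preview in another tag.
    jpeg = subprocess.run(
        ["exiftool", "-b", "-JpgFromRaw", nef_path],
        check=True, capture_output=True,
    ).stdout
    # -n prints the numeric orientation, -s3 prints the value only.
    raw = subprocess.run(
        ["exiftool", "-n", "-s3", "-Orientation", nef_path],
        check=True, capture_output=True, text=True,
    ).stdout.strip()
    args = jpegtran_args(int(raw) if raw else 1)
    if not args:
        return jpeg
    # jpegtran reads stdin and writes stdout when no file names are given.
    return subprocess.run(args, input=jpeg, check=True, capture_output=True).stdout
```

A CGI handler would then send the bytes returned by `preview_jpeg` with an `image/jpeg` content type.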

Implementation

The remainder of this document describes the toolset that I built, mainly in terms of how I am using it. Let us assume that I have been out for a day trip with my family and have taken (typically) 100-200 photographs. I usually carry a GPS data logger with me. This device contains a GPS receiver that stores the precise coordinates of where it has been.

On my return home, I will:

- Download the 100-200 NEF images from the camera to my computer.

- Download the data from the GPS data logger to my computer.

Next, I may briefly review the images with a fast viewer (I prefer Irfanview) and delete any very poor quality images (i.e. duds) that are not worth saving.

Now my home grown Digital Asset Management system comes into play. I will edit a few lines of a configuration file and run a command line program to register all of the images into the system.

The registration script will:

- Process each file

- Optionally process the GPS data and correlate the camera time with the GPS time to figure out exactly where each picture was taken. This part of the process uses a Perl program adapted from the open source package gpsPhoto.

- Optionally, create a KML file. This file can be used to drive the Google Earth software which provides an interactive map with a "pin" inserted for each picture.

- Create a small 200 pixel by 200 pixel thumbnail of each image in JPEG format. This becomes very useful later for rapidly browsing large numbers of images. The thumbnails are made using the ImageMagick software.

- Optionally add some IPTC metadata to each NEF image.

- Register the image, the EXIF data and IPTC data in the Digital Asset Management system database.
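The GPS correlation step in the list above amounts to looking up each photo's timestamp in the logger's track. The sketch below shows one common approach, linear interpolation between the two surrounding track points. It is a Python illustration and is not taken from gpsPhoto or from my registration script. The function name `locate`, the tuple layout, and the five-minute `max_gap` default are assumptions, and the camera clock is assumed to have already been corrected to GPS time.

```python
from bisect import bisect_left
from datetime import datetime, timedelta


def locate(photo_time, track, max_gap=timedelta(minutes=5)):
    """Interpolate (lat, lon) for photo_time from a time-sorted track.

    track is a list of (datetime, lat, lon) tuples. Returns None when the
    photo falls outside the track or inside a gap longer than max_gap.
    """
    times = [point[0] for point in track]
    i = bisect_left(times, photo_time)
    if i < len(track) and times[i] == photo_time:
        return track[i][1], track[i][2]  # exact match with a logged fix
    if i == 0 or i == len(track):
        return None  # before the first fix or after the last one
    (t0, lat0, lon0), (t1, lat1, lon1) = track[i - 1], track[i]
    if t1 - t0 > max_gap:
        return None  # the logger lost its fix; do not guess
    fraction = (photo_time - t0) / (t1 - t0)
    return lat0 + fraction * (lat1 - lat0), lon0 + fraction * (lon1 - lon0)
```

Returning `None` for photos outside the track leaves room for a fallback, such as inserting a predefined location later.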

NOTE: at this point, all of the images within a single batch are tagged with the same IPTC data values (caption, keywords etc.).
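The optional KML step in the registration list can be illustrated with a short sketch. This is a minimal Python example, not the code I run. The function name `build_kml` and the `(name, lat, lon)` tuple layout are assumptions, and the output uses only the basic `Placemark` and `Point` elements of KML 2.2.

```python
from xml.sax.saxutils import escape


def build_kml(photos):
    """Return a KML document with one placemark per (name, lat, lon) tuple."""
    placemarks = []
    for name, lat, lon in photos:
        # KML coordinates are written longitude first, then latitude.
        placemarks.append(
            "    <Placemark>\n"
            f"      <name>{escape(name)}</name>\n"
            f"      <Point><coordinates>{lon},{lat}</coordinates></Point>\n"
            "    </Placemark>\n"
        )
    return (
        '<?xml version="1.0" encoding="UTF-8"?>\n'
        '<kml xmlns="http://www.opengis.net/kml/2.2">\n'
        "  <Document>\n"
        + "".join(placemarks)
        + "  </Document>\n"
        "</kml>\n"
    )
```

Writing the returned string to a `.kml` file gives Google Earth one pin per photograph.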

Next, I will most likely fire up Capture NX to create a high quality conversion of each RAW image to JPEG format. This I also run as a batch process although that does not preclude the possibility of going back and making manual adjustments to individual images.

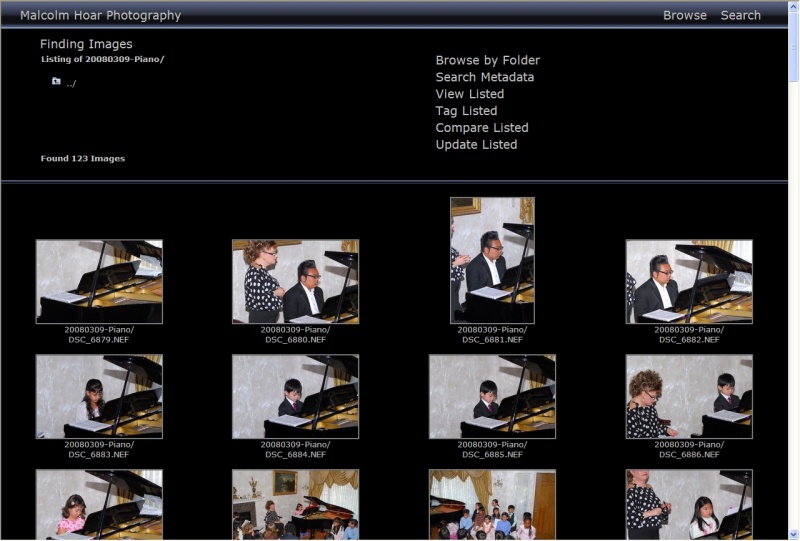

Most of this Digital Asset Management system (other than the registration script) is accessed via a web browser. Hence I am running the Apache web server software on my system to service those requests and execute the Perl CGI scripts. Once the images have been registered into the Digital Asset Management system I can point my browser at the system and browse the directory structure. The current software makes extensive use of JavaScript but it appears to work well with Microsoft Internet Explorer, Mozilla Firefox and Apple Safari. Here we see the contents of a folder called 2008030-Piano containing the photographs taken at my daughter's recent piano recital:

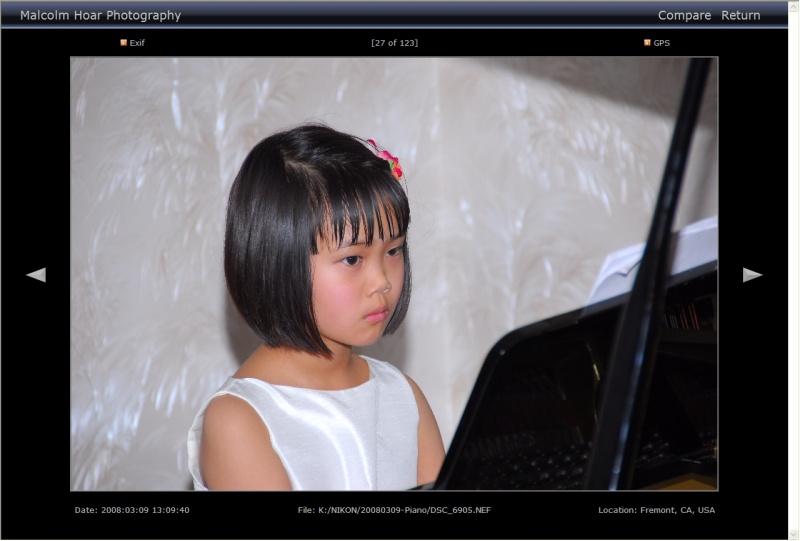

Clicking on a thumbnail takes me to an image review screen. I can move forwards to the next image or back to the previous one by clicking the arrows on either side of the image. Or I can return to the thumbnail overview of the current selection.

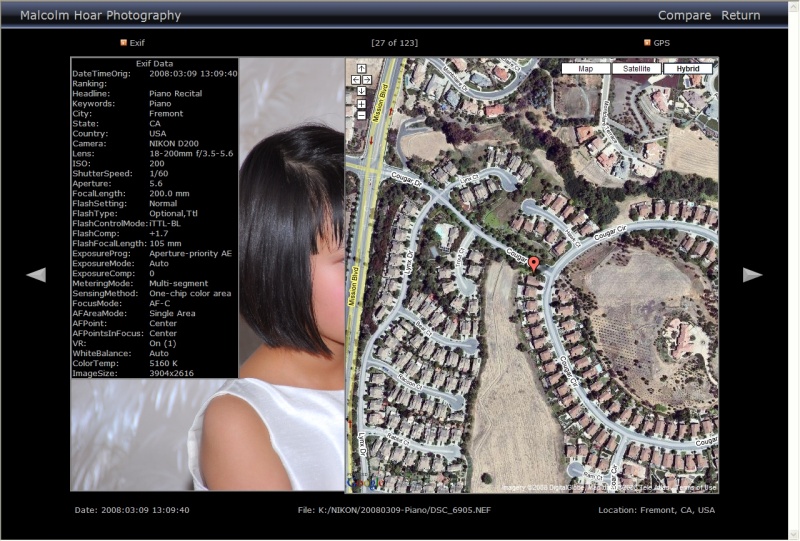

Mousing over the EXIF or GPS icons pops up additional information. The GPS coordinates are stored in EXIF data fields of the image. The map display is generated within the browser using the Google Maps API.

I also have an image comparison page where I can scroll two pointers forwards and backwards through a list of images. Mousing over one thumbnail will bring that image to the top. This is a useful tool for selecting the best from several similar images:

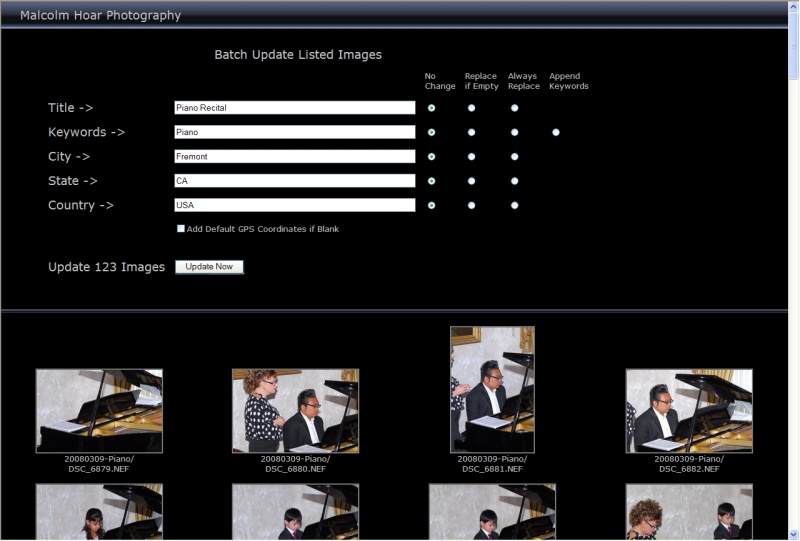

Next I have a screen that allows me to update the metadata in a preselected batch of images. This includes options to update only those data fields that are currently empty, or to update them unconditionally. In the case of IPTC keywords, there is an option to append new keyword(s) to the existing keyword values. If no GPS data is present I have an option to insert the coordinates of a predefined location. Hence when taking pictures at home (without the GPS data logger) the system can simply add the coordinates of my house.
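The three update behaviours described above (fill empty fields only, overwrite unconditionally, append keywords) can be expressed as one small function. This is a Python sketch under assumed names. The function `update_metadata`, its flags, and field names such as `caption` and `city` are hypothetical and do not reflect the actual form fields.

```python
def update_metadata(current, changes, overwrite=False, append_keywords=False):
    """Return a copy of current with changes applied according to the flags.

    overwrite=False       only fills fields that are empty or missing
    overwrite=True        replaces fields unconditionally
    append_keywords=True  adds new keywords to the existing keyword list
    """
    updated = dict(current)
    for field, value in changes.items():
        if field == "keywords" and append_keywords:
            existing = list(updated.get("keywords") or [])
            # Keep the existing order and skip keywords already present.
            updated["keywords"] = existing + [k for k in value if k not in existing]
        elif overwrite or not updated.get(field):
            updated[field] = value
    return updated
```

Applying the same function to every image in the batch gives each image its own result, so a field left empty on one image can be filled without disturbing values already set on another.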

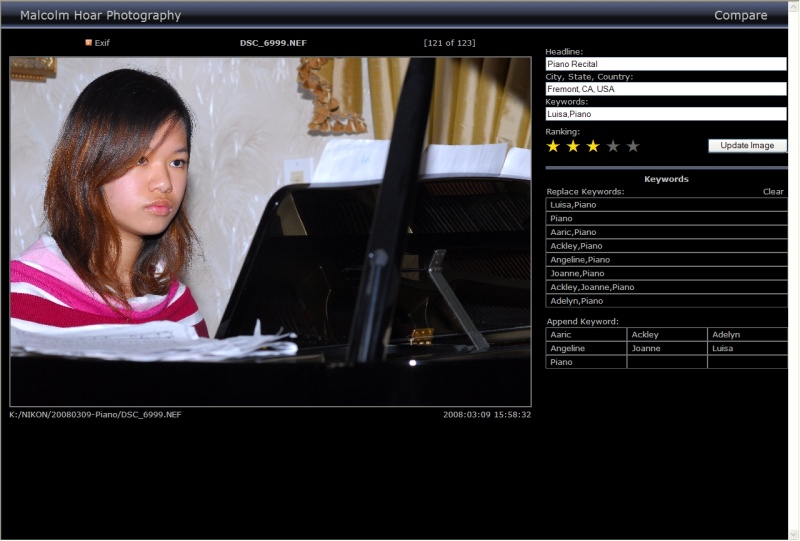

Most importantly (for me) the system will guide me through a preselected list of images so I can rank and tag them individually. The system displays the image and file name in addition to recently used keywords and keyword combinations -- clicking these will update the keyword settings for the current image. The system will also de-duplicate the keywords, normalize the capitalization, and sort them alphabetically. I can rank individual images by clicking on the appropriate "star". When I am satisfied with my selections I press the submit button. The system will update the metadata stored within the image and within the database. And then it will automatically display the next image for tagging. In total, each transaction consumes about 0.5 seconds of elapsed time, which I have found to be perfectly acceptable. Because the recently used keywords and keyword combinations are immediately available, I can tag most images in a few seconds with just 4 or 5 mouse clicks.
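The keyword clean-up mentioned above (de-duplicate, normalize capitalization, sort) is simple enough to show in a few lines. This Python sketch assumes that "normalize" means capitalizing the first letter of each word and that duplicates are compared without regard to case. Both conventions, and the name `normalize_keywords`, are illustrative assumptions.

```python
def normalize_keywords(keywords):
    """De-duplicate keywords ignoring case, capitalize each word, and sort."""
    unique = {}
    for keyword in keywords:
        words = keyword.split()  # also collapses repeated whitespace
        if not words:
            continue  # skip empty entries
        cleaned = " ".join(word.capitalize() for word in words)
        unique[cleaned.lower()] = cleaned
    return sorted(unique.values())
```

For example, `normalize_keywords(["piano", "Piano ", "family"])` yields a list containing `Family` and `Piano` once each.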

Here's a more interactive example of the tagging screen:

Interactive example. Try clicking on the keywords to see how this works.

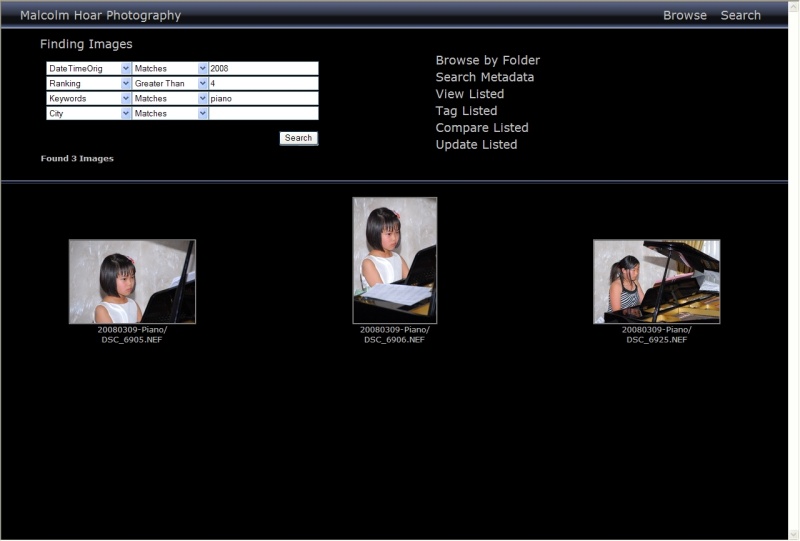

Finally, since all of these metadata are stored in a database, I have comprehensive search capabilities. The database is in reality a simple text file with fixed-length 1024-byte records. This is very easily parsed and searched using Perl. Few of my images involve more than 500-600 bytes of metadata, so the 1024-byte limit on the record size does not appear to be cause for any concern. In any event, it could easily be increased. The fixed-length records enable the system to rapidly navigate the database via pointers and provide excellent performance. Hence the system can index around 8000 images in the disk space required to store a single NEF image (approx. 10 MB). In its current form, the database is almost certainly scalable to some hundreds of thousands of images. With a very modest amount of work it could be made an order of magnitude more scalable. Most of the (useful) EXIF and IPTC data is searchable.
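The benefit of fixed-length records is that record number N always starts at byte offset N × 1024, so any record can be reached with a single seek. The Python sketch below illustrates the idea. The space padding, the UTF-8 encoding, and the helper names `pack_record` and `read_record` are assumptions, not a description of the actual file layout.

```python
import io

RECORD_SIZE = 1024  # bytes per record, as described in the text


def pack_record(text):
    """Encode text and pad it with spaces to exactly RECORD_SIZE bytes."""
    data = text.encode("utf-8")
    if len(data) > RECORD_SIZE:
        raise ValueError("metadata exceeds the fixed record size")
    return data.ljust(RECORD_SIZE, b" ")


def read_record(handle, index):
    """Jump straight to record number index and return its text."""
    handle.seek(index * RECORD_SIZE)
    data = handle.read(RECORD_SIZE)
    if len(data) < RECORD_SIZE:
        raise IndexError(f"no record {index}")
    # Trailing padding is removed; genuine trailing spaces would be lost too.
    return data.decode("utf-8").rstrip(" ")
```

With a real file, `handle` would be opened in binary mode. A full scan for searching is a loop over `handle.read(RECORD_SIZE)` until an empty result is returned.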

Issues and Next Steps

The existing code was hacked together quickly and informally. It's not highly modular or extensible and some personal conventions and preferences are hard coded. More comprehensive error checking is also needed. Thus the software is not currently in a suitable state to be deployed on computers other than my own.

The browser paradigm does create a few issues. In particular, inappropriate use of the browser's "Back" button in association with browser caching can cause some problems (inconvenient rather than fatal). This can probably be resolved although I have not yet formulated a generalized solution.

The system is capable of performing various actions upon a selected list of images. Those selections can be made by folder browsing or metadata searches. However, it is not currently possible to manually select a sub-set of a folder, or a sub-set of the list that results from a search. Web browsers don't inherently support the shift-click and control-click methods of making multiple selections. My existing code could easily be enhanced to display a series of thumbnail images with a check box adjacent to each one. However, I feel making multiple selections via a potentially large number of checkboxes would be tedious at best. A better solution is needed. I would welcome any ideas or suggestions; how can you quickly and easily select multiple images from a large number of thumbnails displayed in a browser window?

The software works very nicely alongside Capture NX. It would be nice if it worked with Adobe Lightroom too. As it happens, Lightroom uses the open source database management system, SQLite, and furthermore, a Perl to SQLite interface is available via the Perl module DBD-SQLite. Therefore, it would be interesting to explore the possibility of interfacing my software with Lightroom and its database of metadata. This is something I intend to pursue when time permits.

I am not willing to publish or share the code at this point; it's simply not in an appropriate state. I would like to do so in the future. However, professional and personal commitments (including three children) mean this may not be possible for some time. Perhaps never. But I have shared the concepts and I am willing to entertain questions and suggestions from seriously interested parties. I hope you'll understand and respect my decisions in this regard.

Contact malch at malch dot com